Beyond ChatGPT: What Students Should Really Learn About AI Systems

Artificial Intelligence has officially entered the classroom conversation—and for good reason. Tools like ChatGPT have made AI visible, accessible, and undeniably powerful. Students can now generate essays, brainstorm ideas, and even write code in seconds.

But here’s the reality: learning to use ChatGPT is not the same as understanding AI.

If we want to truly prepare students for the future, we need to go far beyond prompt typing. We need to teach them how AI systems actually work, how to think critically about them, and how to use them responsibly in a rapidly evolving world.

1. AI Is More Than a Tool—It’s a System

Many students interact with AI as if it’s a “magic answer machine.” Ask a question, get a response. But behind every AI tool is a complex system built on:

- Data (the examples the model learns from)

- Algorithms (the training procedures that extract patterns from that data)

- Models (the trained result that generates outputs)

Students should understand that AI:

- Does not “know” things the way humans do

- Relies on patterns, not true understanding

- Can reflect biases present in its training data

Why it matters:

Without this foundational knowledge, students risk becoming passive users rather than informed thinkers.
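
To make "patterns, not true understanding" concrete, here is a minimal Python sketch of a toy next-word predictor. It is not how ChatGPT is built; it is a deliberately tiny illustration, and the function names and training sentence are made up for this example. The model only counts which word tends to follow which, so it can continue familiar text but has nothing to say about words it has never seen.

```python
from collections import Counter, defaultdict

def train_bigram_model(text):
    """Count which word follows which in the training text."""
    words = text.lower().split()
    follow_counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts

def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Hypothetical training data: the model "knows" only what appears here.
training_text = "the sky is blue . the sea is blue . the grass is green ."
model = train_bigram_model(training_text)

print(predict_next(model, "is"))      # blue (seen twice, versus green once)
print(predict_next(model, "banana"))  # None (never appeared in the data)
```

Students can change the training sentence and watch the prediction change, which shows that the output reflects the data rather than any knowledge of skies or grass.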

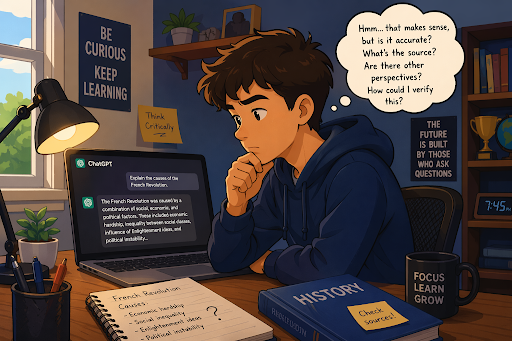

2. Prompting Is Just the Beginning

Prompt engineering is often marketed as the must-have AI skill—and it is valuable. But it’s only one piece of a much bigger picture.

Students should also learn:

- How to evaluate AI-generated responses

- How to refine outputs through iteration

- When not to rely on AI

A well-written prompt can produce a convincing answer—but not necessarily a correct one.

Real skill: Knowing how to question the output, not just generate it.

3. Bias, Ethics, and Responsibility

AI systems are not neutral. They are shaped by the data they’re trained on and the humans who design them.

Students need to explore:

- Algorithmic bias and fairness

- Ethical use of AI (especially in academics)

- Privacy and data security

- The impact of AI on society and jobs

For example, AI-generated media such as voice clones and deepfakes is already shaping public perception and creating risks to personal safety.

Future-ready students aren’t just tech-savvy—they’re ethically aware.
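
One way to make algorithmic bias tangible is to measure it. The sketch below compares how often an imaginary screening model approves people from two groups. The data is entirely hypothetical, and the gap it computes is a simplified version of what fairness researchers call the demographic parity difference; it is one possible measure among several, not a complete fairness audit.

```python
from collections import defaultdict

def selection_rates(decisions):
    """Return the share of positive decisions for each group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}

# Hypothetical output of an imaginary model: (group, approved?)
decisions = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = selection_rates(decisions)
gap = max(rates.values()) - min(rates.values())

print(rates)  # {'A': 0.75, 'B': 0.25}
print(gap)    # 0.5
```

A large gap does not prove the model is unfair on its own, but it is exactly the kind of result students should learn to notice and question.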

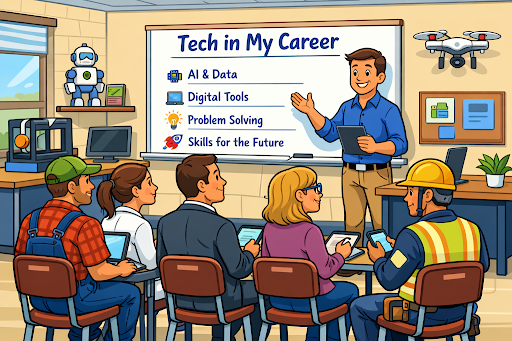

4. Real-World Applications of AI

AI is no longer confined to tech companies. It’s being applied across a wide range of industries:

- Healthcare (diagnostics, patient data analysis)

- Agriculture (predictive farming, automation)

- Business (data-driven decision making)

- Education (personalized learning systems)

Students should explore how AI connects to careers they care about—not just coding, but context.

Key takeaway: Every career is becoming a tech career.

5. Human Skills Still Matter—More Than Ever

Ironically, as AI becomes more powerful, human skills become more important.

Students should be developing:

- Critical thinking

- Creativity

- Communication

- Problem-solving

- Adaptability

AI can generate content—but it cannot replace human judgment, empathy, or originality.

The goal isn’t to compete with AI—it’s to collaborate with it.

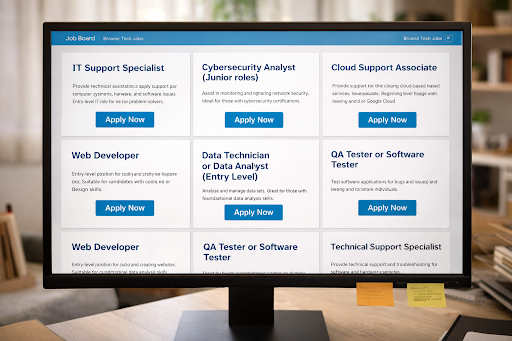

6. Building, Not Just Using

The most impactful learning happens when students move from consumers to creators.

That could include:

- Training simple machine learning models

- Exploring how datasets influence outcomes

- Building AI-powered projects

- Understanding APIs and integrations

Even at a basic level, this shifts their mindset from “What can AI do for me?” to “How can I design solutions using AI?”
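
As a starting point for "exploring how datasets influence outcomes," here is a small Python sketch students could build themselves. It trains the same word-counting classifier on two made-up sets of reviews and asks both versions about the same sentence. The `train` and `classify` functions and all of the example reviews are hypothetical, and the approach is far simpler than a real machine learning model.

```python
from collections import Counter

def train(examples):
    """Count how often each word appears under each label."""
    counts = {}
    for text, label in examples:
        counts.setdefault(label, Counter()).update(text.lower().split())
    return counts

def classify(counts, text):
    """Pick the label whose training words overlap most with the text."""
    words = text.lower().split()
    scores = {label: sum(c[w] for w in words) for label, c in counts.items()}
    return max(scores, key=scores.get)

# Balanced data: "game" appears in both positive and negative reviews.
balanced = [
    ("great fun game", "positive"),
    ("boring slow game", "negative"),
    ("great story", "positive"),
    ("slow boring story", "negative"),
]

# Skewed data: every review that mentions "game" happens to be negative.
skewed = [
    ("boring game", "negative"),
    ("slow game", "negative"),
    ("bad game", "negative"),
    ("great story", "positive"),
]

review = "great game"
print(classify(train(balanced), review))  # positive
print(classify(train(skewed), review))    # negative
```

The code is identical in both runs; only the data changed. That is the lesson worth drawing out: the skewed dataset taught the classifier to treat the word "game" itself as a bad sign.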

Final Thoughts: From Users to Thinkers

AI tools like ChatGPT are a great entry point—but they should never be the finish line.

If we stop at tool usage, we prepare students for today.

If we teach systems thinking, ethics, and application, we prepare them for tomorrow.

The future doesn’t belong to the students who can simply use AI.

It belongs to those who understand it, question it, and shape it.

If you’re building programs, curriculum, or classroom experiences around AI, this is the shift that matters most:

Don’t just teach students how to prompt.

Teach them how to think.